Today (21st May) is Global Accessibility Awareness Day

Within Student Journey, we have a specialised Accessibility and Wellbeing Team who work with students throughout the year. The Team includes over 50 non-medical helper staff who provide 1:1 mentoring or study skills support for students with a disability or learning difference.

Last year they supported over 800 of our students, and the number of students they support has increased by 34% over the last five years.

Here’s what our students have been saying about the team this year:

…incredibly invaluable, allowing me to… remain in university through the several challenges that have occurred during my course.

…really appreciate…[having]…continued support throughout our entire degree from the same people (as opposed to them changing each year). I am autistic and that makes a real difference for me.

[The support worker] has single-handedly been the most important person in my university experience… without the services I would have less routine and interest from others, which have been the two most vital components in my success in university.

We think that this student sums up the service perfectly: “Amazing…Absolutely brilliant…Fantastic…Phenomenal…Invaluable…Top tier”

But it’s not only these staff who are making sure that what we do is as accessible as possible. Here are some of the things that our other teams do.

Blackboard Content

Blackboard Ally is available to all students and staff at the university. This year, 59,541 documents have been downloaded in an alternative format by 3,894 users. The most common format is Tagged PDF.

Staff have made 3,417 fixes to content – that’s 3,417 changes that make teaching materials more accessible to use.

The average Ally course score for 2025-26 courses in Blackboard is 72%.

In November 2025, AU entered Blackboard's Fix Your Content Day and placed 3rd in the UK for the number of fixes made to Blackboard content.

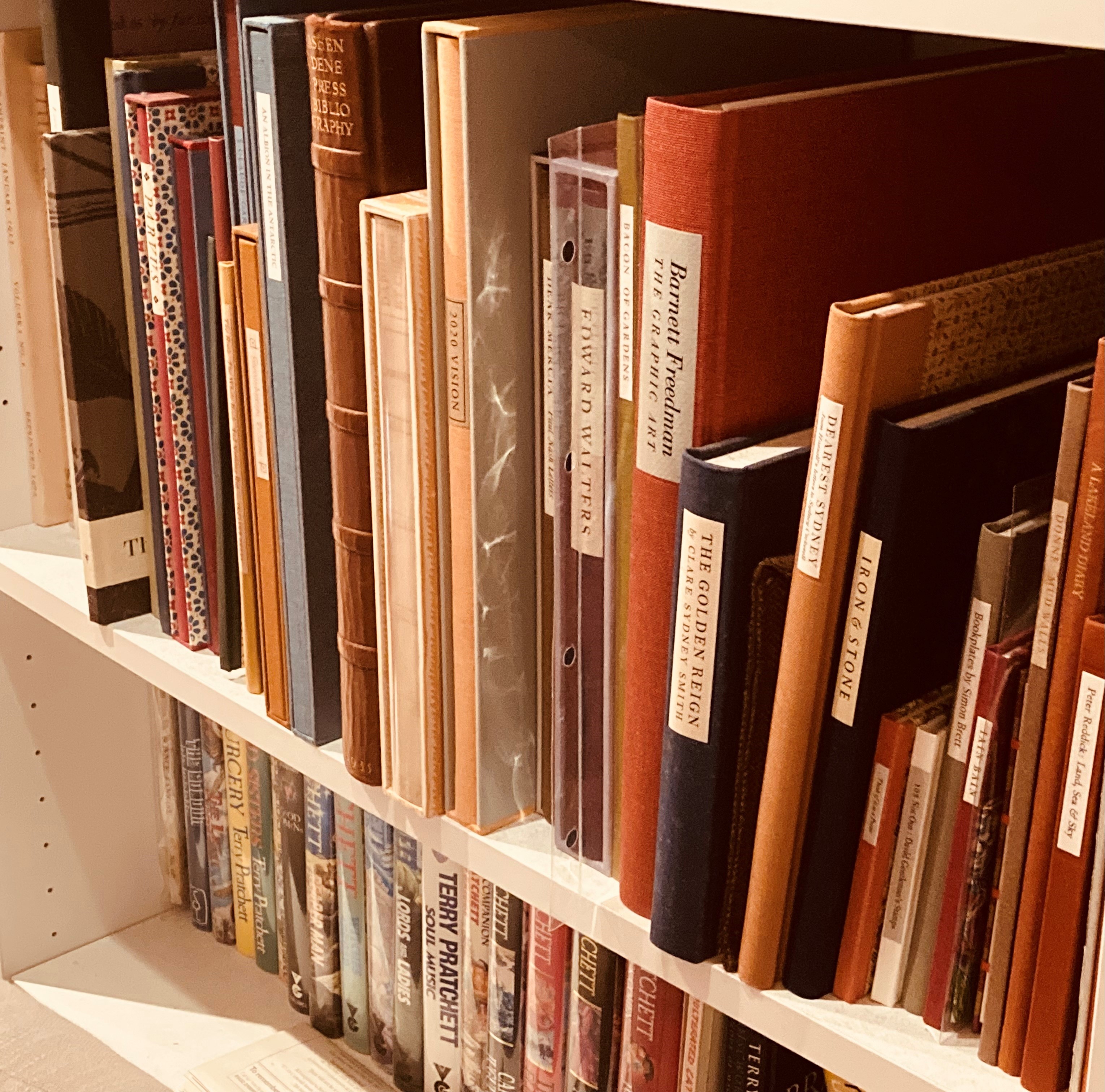

Books and journal articles

All the book chapter and journal article scans that our digitisation service produces for reading lists are in an accessible format. The service uses OCR (Optical Character Recognition) scanning, which means that uploaded scans of book chapters and journal articles in reading lists are fully machine-readable, searchable and accessible. As well as complying with legislation, these scans are accessible to all learners. OCR-scanned documents are compatible with assistive technologies such as screen readers, and they also support text navigation.

As well as scanning items for reading lists, the team can also create accessible copies of books for students who have declared a disability to the university. We have a licence that allows us to make or find accessible copies of books if a suitable version is not available for us to purchase. If a text is not covered by the licence but we own an original copy, we may still be able to produce an accessible copy for personal study or research.

This service is free of charge to eligible students and can be accessed by emailing digitisation@aber.ac.uk.

Sensory items for wellbeing sessions

Staff in our Wellbeing Service have introduced boxes of sensory items that can be offered to students in Wellbeing sessions to help them manage their need for self-stimulation (stimming). Here's an example of the items available:

Using AI

Our new AI prompt library, which is available to everyone, includes information about using AI for users with accessibility requirements. Here are some samples from the prompt library:

- Plain Language Rewriting

“Rewrite the following text in plain, easy to understand language while keeping the original meaning. Break complex sentences into shorter steps and remove unnecessary jargon. Highlight any terms that may still require explanation.”

- Neurodiversity Friendly Step by Step Guide

Finding your way around

AccessAble is a brilliant resource that makes planning a visit to, and finding your way around, our campus here at Aberystwyth that bit easier. It provides information about accessible parking spaces, ramp access, the locations of hearing loops and where to find accessible toilets. This can be really reassuring for people planning to visit somewhere new or unfamiliar.